Dean DeBiase is a best-selling author and Forbes Contributor reporting on how global leaders and CEOs are rebooting everything from growth, innovation, and technology to talent, culture, competitiveness, and governance across industries and societies.

Why Quantum Computing Could Take AI To The Next Level After Nvidia GTC

By Dean DeBiase

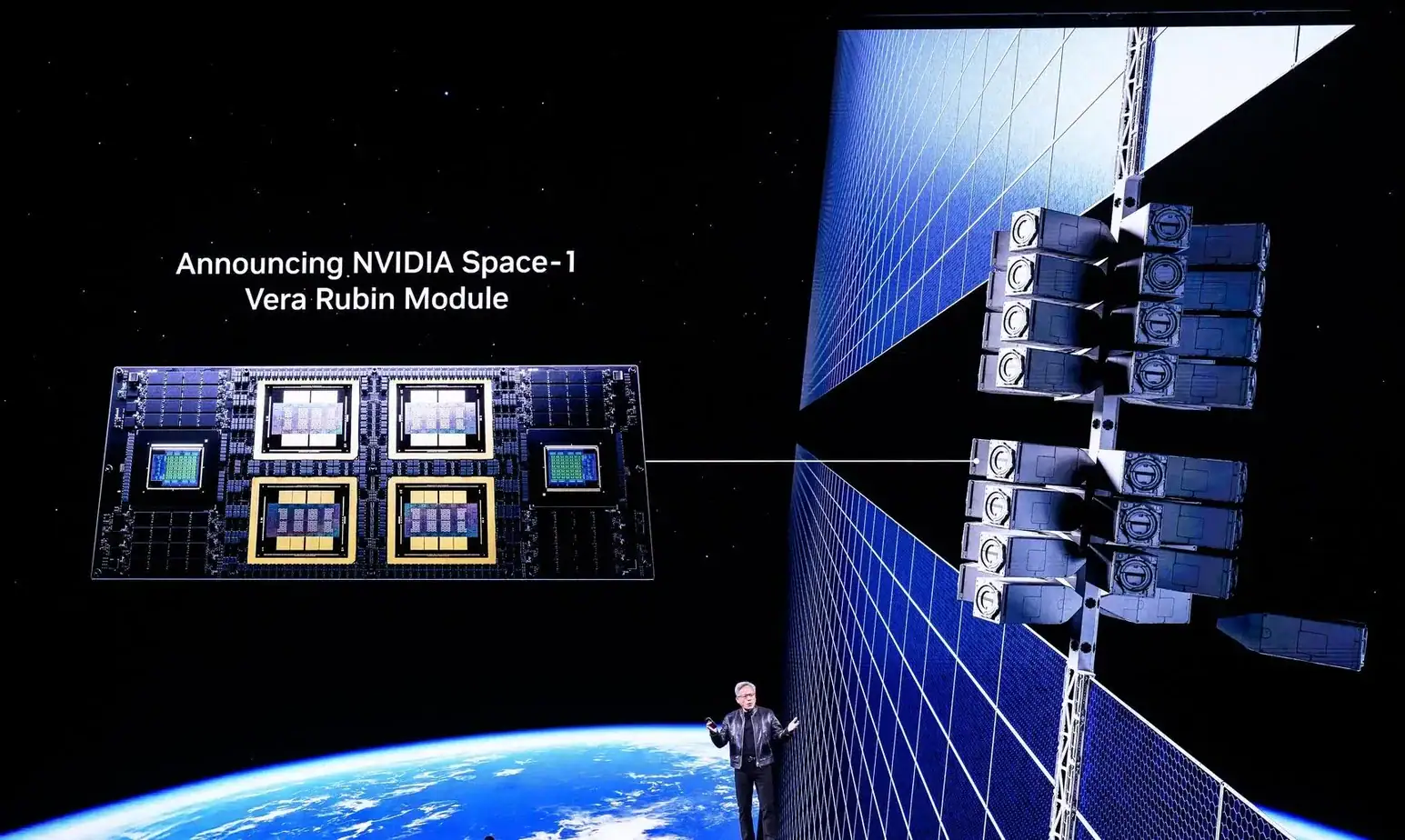

While some folks obsess over AI hype and a possible bubble, the Nvidia GTC conference this week showcased significant developments for the C-suite leaders who flocked to San Jose. Nvidia founder and CEO Jensen Huang made it clear that quantum computing, among other technologies, will be an important part of the next generation of computing ecosystems and platforms.

I can’t blame some for being skeptical. After all, quantum advantage (the milestone where a quantum computer can solve extremely complex problems significantly faster, more efficiently, or more accurately than classical supercomputers) always seems to be five years away. Technology breakthroughs have always come down to the how and the when. What once filled the entire space of IBM’s early computer lab now fits on a chip. The question is how and when the world will develop quantum on a chip.

But given Huang’s GTC pronouncements, and the emergence of a potential game changer called neutral atom quantum computing, I think it is worth taking a closer look at the state of play in quantum and at how and when it can help advance not just AI and data centers, but industries and societies.

GTC Ecosystems Set The Stage For HPC Evolution

It’s hard to overstate the importance of Nvidia and its GTC conference. What began in 2009 as a geek fest about GPUs has grown alongside Nvidia’s rise as the dominant chip force behind the AI boom. Today the event has effectively become the annual summit for accelerated computing, where the world reads Huang’s keynote, and what companies announce around it, as a sign of where the next generation of computing is headed.

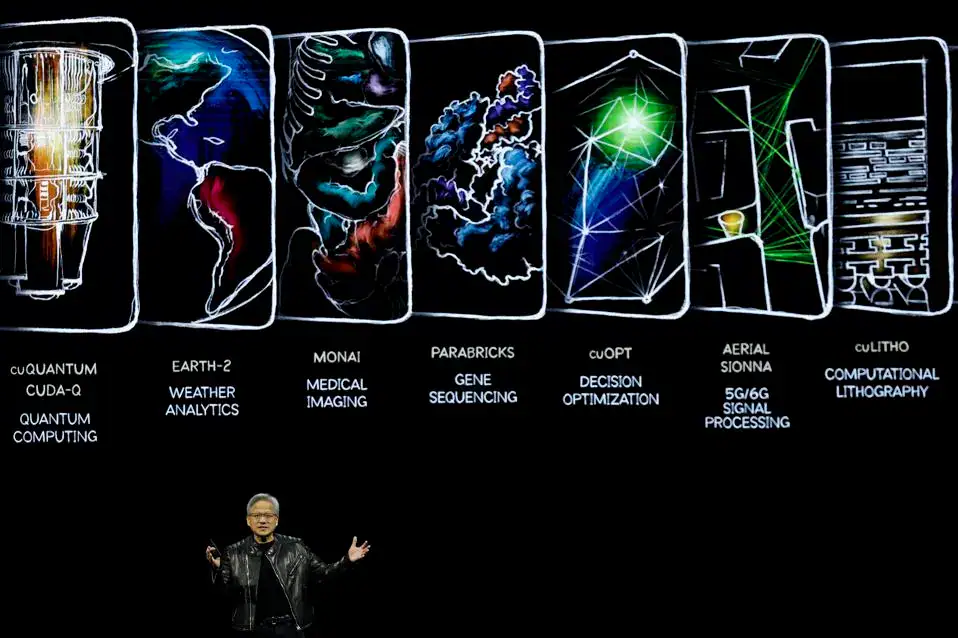

This year’s message was clear: even as GPU-powered AI supercomputers scale rapidly, the scientific problems researchers want to solve are growing even faster. Fields such as materials science, drug discovery, and climate modeling push classical systems to their limits. Nvidia itself is preparing for a future where quantum processors augment classical HPC systems.

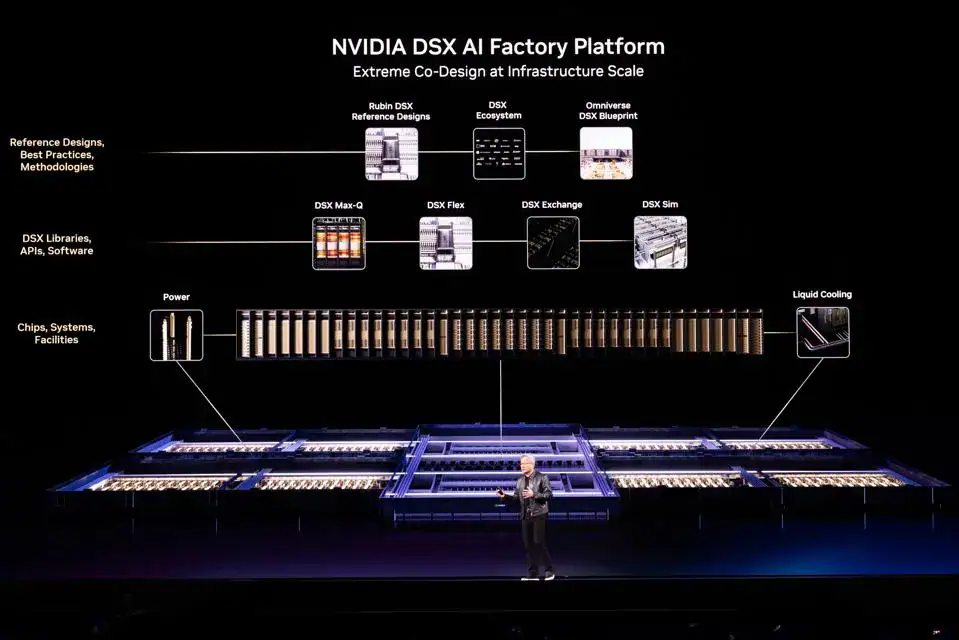

Nvidia increasingly frames the data center not as a collection of standalone chips, but as an integrated accelerator platform built around full systems, networking, efficiency, AI-factory economics, and power. Speaking of power, in my recent interview with Dan Brouillette, former U.S. Secretary of Energy, we unpacked the ramifications of unbridled data center growth for power grids across the world.

Nvidia’s vision of a platform future creates a much clearer architectural role for quantum processing units (QPUs) inside future HPC and data-center environments. The company’s current messaging reinforces the idea that next-generation compute infrastructure will combine multiple accelerator types rather than rely on CPUs and GPUs alone. As Huang put it, “In the future, supercomputers will be quantum-GPU systems — combining the quantum computer’s ability to simulate nature and the GPU’s programmability and massive parallelism.”

Too heavy? In other words, GPUs aren’t going away. But the most demanding scientific workloads may ultimately require new computing paradigms working alongside classical supercomputers — and quantum computing is increasingly seen as one of the most promising.

Optical “Tweezers” That Can Grasp Individual Atoms

Most agree that quantum computing will complement classical computing, not replace it. But what kind will prevail?

There’s a horse race among approaches and vendors, each piling on qubits (the fundamental unit of quantum information, analogous to a classical bit), yet none has scaled far enough to reach undeniable quantum advantage.

Let me explain, because it is easy to get lost in the very deep weeds of this field. Quantum computing taps strange properties of quantum physics to process information in ways classical computers cannot. For example, the qubits I just mentioned can hold multiple states at the same time, which lets quantum computers solve certain problems, especially in complex optimization, molecular simulation, and materials science, much more efficiently.

Superconducting qubits, used by companies like IBM and Google, operate at extremely low temperatures and face challenges with noise, error rates, and scaling to large systems. Trapped-ion systems (e.g., from IonQ) use electrically charged atoms, confined by electromagnetic fields, as qubits. Photonic approaches manipulate particles of light. Each of these technologies has made important progress, but their limitations have opened the door for alternative architectures.

Enter neutral atom quantum computing, which I learned about recently when I spoke with Dr. Alexander Glätzle, CEO and co-founder of Planqc, a German company spun out of the Max Planck Institute of Quantum Optics.

As he explained, these quantum computers use individual atoms, trapped and controlled with lasers, as qubits. They employ optical “tweezers” that can grasp and arrange individual atoms. The architecture scales well and is less costly to build than other kinds of quantum computers. It also does not require the cryogenic supercooling that superconducting systems depend on.

Planqc is leading a $23M project to build a 1,000-qubit neutral-atom quantum computer for the Leibniz Supercomputing Centre (LRZ), one of Germany’s three national supercomputing centers. This system will integrate into the supercomputing environment as a quantum co-processor. The initiative follows earlier contracts to develop scalable neutral-atom systems and a $50M Series A round to accelerate development of industry-scale quantum processors.

Prof. Dr. Dieter Kranzlmüller, Chairman of the Board of Directors at LRZ, said, “We’re excited to bring their neutral atom system into LRZ and to work together to merge its unique capabilities into our integrated High-Performance Computing and Quantum Computing (HPCQC) environment for the benefit of our users’ research. The potential wins for next-generation hybrid computing are many.”

Dr. Glätzle explained further, regarding the potential for data centers and HPC: “We see quantum computing becoming part of the future computing landscape in more ways than one. In some settings, quantum processors will be tightly integrated into hybrid HPC and data-center environments, working alongside classical systems and accelerators on the hardest computational tasks. In other settings, users will access quantum computing more directly through dedicated systems and quantum-computing-as-a-service. What matters now is that some in the industry are beginning to prepare for that future. In our view, neutral atoms are especially compelling because they combine a credible path to scale with a practical infrastructure profile. That opens up both routes: integration into larger compute environments and the standalone deployment of powerful quantum systems.”

The Next Levels Of AI Need Quantum

During my recent interview with Andrew McLaughlin, COO of Google spinout SandboxAQ, we discussed how the company uses quantum science principles to advance AI and solve the world’s toughest quantitative problems: the big problems where LLMs fall short.

Based on NSF projects and ventures I have been advising, I know quantum computers have a long way to go before you start seeing them in your local data center. But even though they still need to be optimized into slimmed-down, commercially efficient platforms (much like the evolution we are seeing in small modular reactors, or SMRs), they are coming.

As with most trends and transitions, smart leaders are helping lead the way, from platforms like Nvidia’s NVQLink, which enables early testing and bridges toward “AI-Quantum factories,” to hyperscalers and data center providers.

Zulfi Alam, Microsoft’s Corporate VP of Quantum, told CNBC, “By the end of the decade, we are confident that we will have machines in data centers that have commercial value.” A bit bullish? Perhaps.

I also spoke with a few other providers to get their take on preparing for a quantum computing future. Simon Tusha, Founder and CTO of TECfusions, a specialized data center infrastructure provider, said, “We didn’t have to pivot for the rise of AI. I created the company with sustainability and innovation at front of mind. From day one, our focus on power generation and efficient cooling designs positioned us to meet the intensity and scale AI demands. And, as the industry looks ahead to quantum, that same mindset, grounded in creativity, speed-to-market, and deep technical expertise, will ensure we’re not just ready for what’s next, but leading the way.”

Joe Teegarden, founder of RWS, a 100MW data center rollup, added, “We have designed and developed flexible modular systems and architectures that are faster and more efficient to deploy, like containerized platforms that provide on-demand, closer-to-client capabilities. Partnering with industry leaders, we have built data centers that perform today’s heavy CPU and GPU workloads, that can migrate to what’s next, including quantum.”

There are a lot of moving parts that will need to align to seize this historic technology era. The when is now. I call on industry, institutional, and government leaders, many with competing agendas, to come together and figure out how to curate and accelerate the ecosystems needed to create the next generation of compute that will once again change the world.